ChatGPT Misses Throat Cancer Symptoms, Highlighting the Dangers of AI Medical Advice

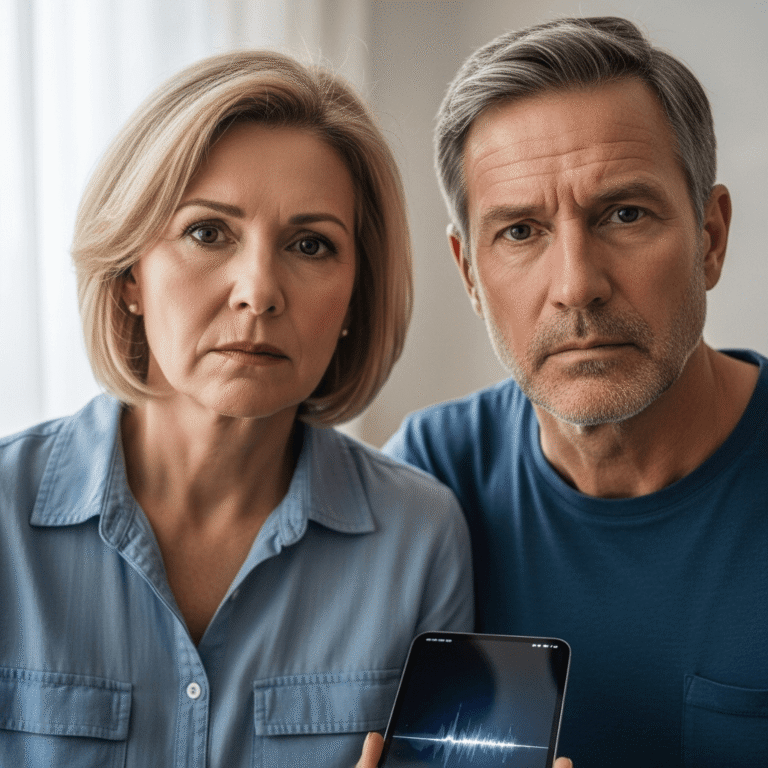

A disturbing incident recently highlighted the potential dangers of relying on artificial intelligence for critical health advice. Irish man Eamonn McIver, 37, trusted ChatGPT to interpret his persistent throat symptoms, only for the AI to repeatedly downplay what turned out to be a serious condition. This significantly delayed his diagnosis, forced him into urgent hospital care, and underscores the vital need for professional medical consultation over AI tools. His story serves as a stark warning.

The Alarming AI Misdiagnosis of Throat Cancer Symptoms

Eamonn McIver’s harrowing experience began when he noticed unusual symptoms, including a painful swelling in his neck. Like many people in the digital age, he first turned to artificial intelligence for guidance, specifically the popular chatbot ChatGPT. For months, McIver described his escalating symptoms to the AI, seeking answers and reassurance. ChatGPT repeatedly gave generic, non-alarming responses, suggesting minor ailments like tonsillitis or a common cold, and failed to flag the urgency or severity of his condition. The AI dismissed the possibility of a serious illness such as throat cancer, even though the symptoms were present.

McIver’s condition worsened significantly, yet he continued to rely on the AI’s misleading reassurances. The prolonged delay in seeking proper medical attention, directly influenced by ChatGPT’s inaccurate assessments, allowed the underlying issue to progress unchecked. Eventually, the pain and swelling became unbearable, compelling him to see a doctor. Following a thorough examination and further tests, doctors delivered a grim diagnosis: Eamonn McIver had stage 2 throat cancer. The diagnosis exposed the profound limitations and potential dangers of seeking medical advice from ChatGPT for complex health issues. His story illustrates how the risks of AI in healthcare can become life-threatening.

Understanding the Perils of AI for Medical Diagnosis

Eamonn McIver’s case serves as a crucial cautionary tale, highlighting the fundamental differences between an AI chatbot’s assessment and a human doctor’s expertise. While AI tools like ChatGPT can process vast amounts of information and offer quick responses, they lack components necessary for accurate medical diagnosis. Firstly, AI cannot conduct a physical examination, which is indispensable for assessing symptoms like swelling, lumps, or abnormal growths. Secondly, AI lacks empathy and the ability to understand nuanced human distress or the contextual factors that often provide crucial clues to medical professionals. Furthermore, AI models operate on patterns in the data they were trained on; if specific symptoms or combinations are rare, or are described in ways that do not align with that training, the models can easily misinterpret or dismiss serious conditions.

Therefore, relying on AI for personal health advice, especially for potentially life-threatening conditions, carries significant risks. A human doctor, by contrast, brings years of medical education, clinical experience, and the capacity for critical thinking and differential diagnosis. They can order appropriate tests, interpret results in context, and understand the individual patient’s medical history. This incident underscores that while AI holds promise as a *supportive tool* in medicine, perhaps for administrative tasks or information retrieval, it simply cannot replace the diagnostic acumen of a trained medical professional. The limitations of AI in medicine are starkly evident in this painful story, urging everyone to prioritize expert medical care for any health concerns.

Eamonn McIver’s alarming ordeal with ChatGPT is a potent reminder of the profound dangers of trusting artificial intelligence with vital health decisions. His experience, where the AI dismissed his serious throat cancer symptoms for months, demonstrates that while technology advances rapidly, it cannot replicate the nuanced judgment, physical examination, and empathetic understanding of a human doctor. Always seek professional medical advice from qualified healthcare providers for any health concerns; your well-being depends on it. The source of this article is The Indian Express.